[Composed 1/4/2026]

I'll be honest: I was not excited to visit the aquarium.

My vacation philosophy leans hard toward the serendipitous and the real. I'd

rather crouch over a tidepool, squinting at whatever tiny creature the tide

left behind, than stand in front of a tank engineered to impress me. The

tidepool requires patience and luck. The aquarium is prepared, packaged,

ready-made wonder. I prize the unprepared version.

The thing is, that's not always the right call. And the

Maui Ocean Center is Exhibit A.

We arrived at 2pm on a day already packed with

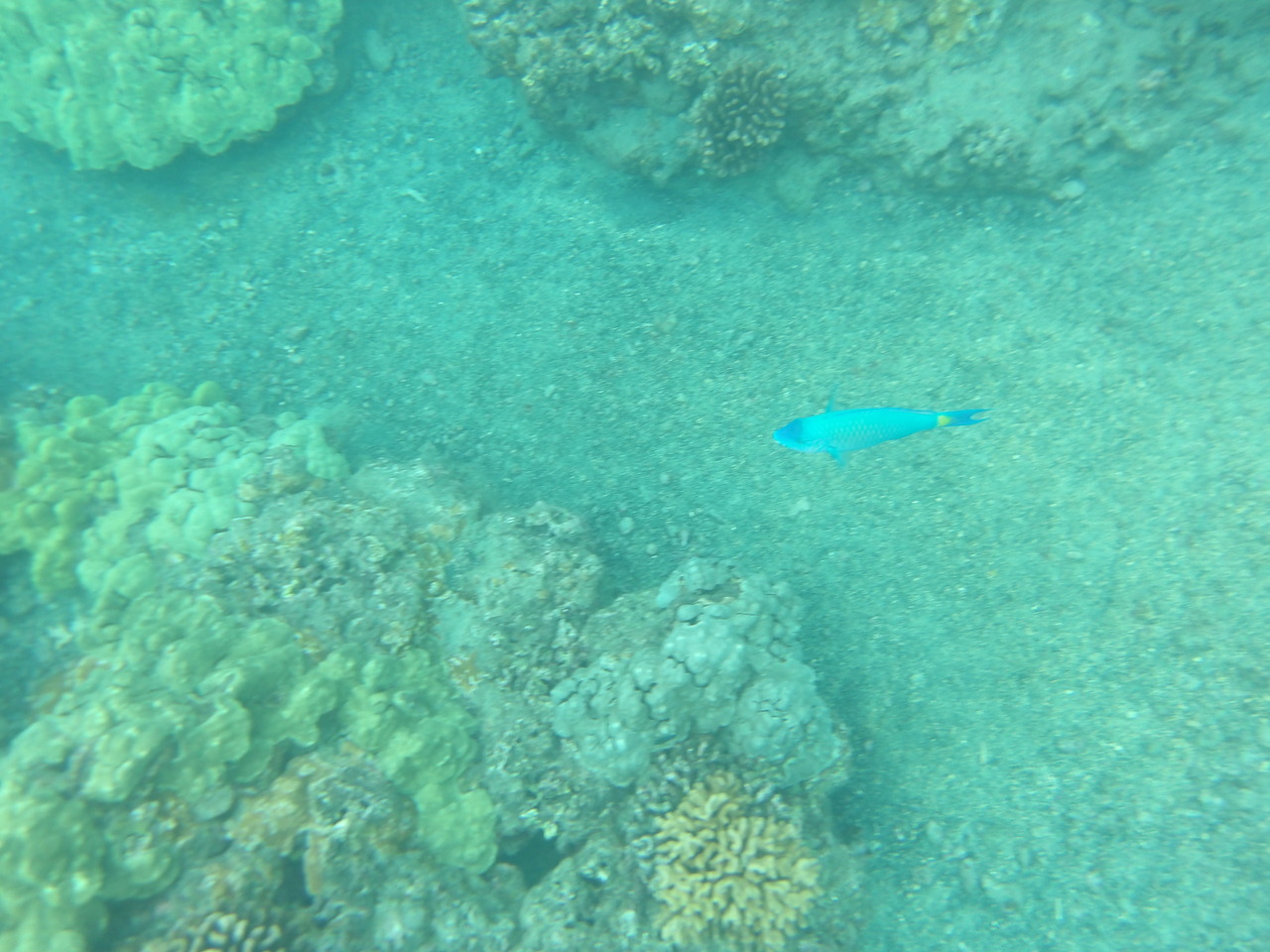

tidepools

(which were a sealife bust!),

a

hula show and a wildlife refuge. The aquarium closes at

5pm. I'd pushed it off as long as I could. It was now or never.

The Maui Ocean Center is smaller than

Baltimore's National Aquarium, which I've

visited a handful of times and consider a gold standard. Size turns out to matter

less than execution. A world-class art gallery doesn't need to be the Louvre.

What it needs to do is make you stop, look, and feel something. The Maui

Ocean Center does exactly that. If you walk out unmoved, I don't know what

to tell you.

The Octopus

The highlight of the standard exhibits wasn't the sharks or the coral — it

was an octopus.

A keeper had her arm in the tank, and the octopus was moving across her hand

and forearm. As it moved, it changed color. Not slowly, not subtly — in real

time, pattern shifting across its skin like someone flipping through channels.

Red, then mottled brown, then almost transparent-gray, then red again.

I knew octopuses could do this. Knowing it and watching it happen two feet

away are very different experiences.

Sharks

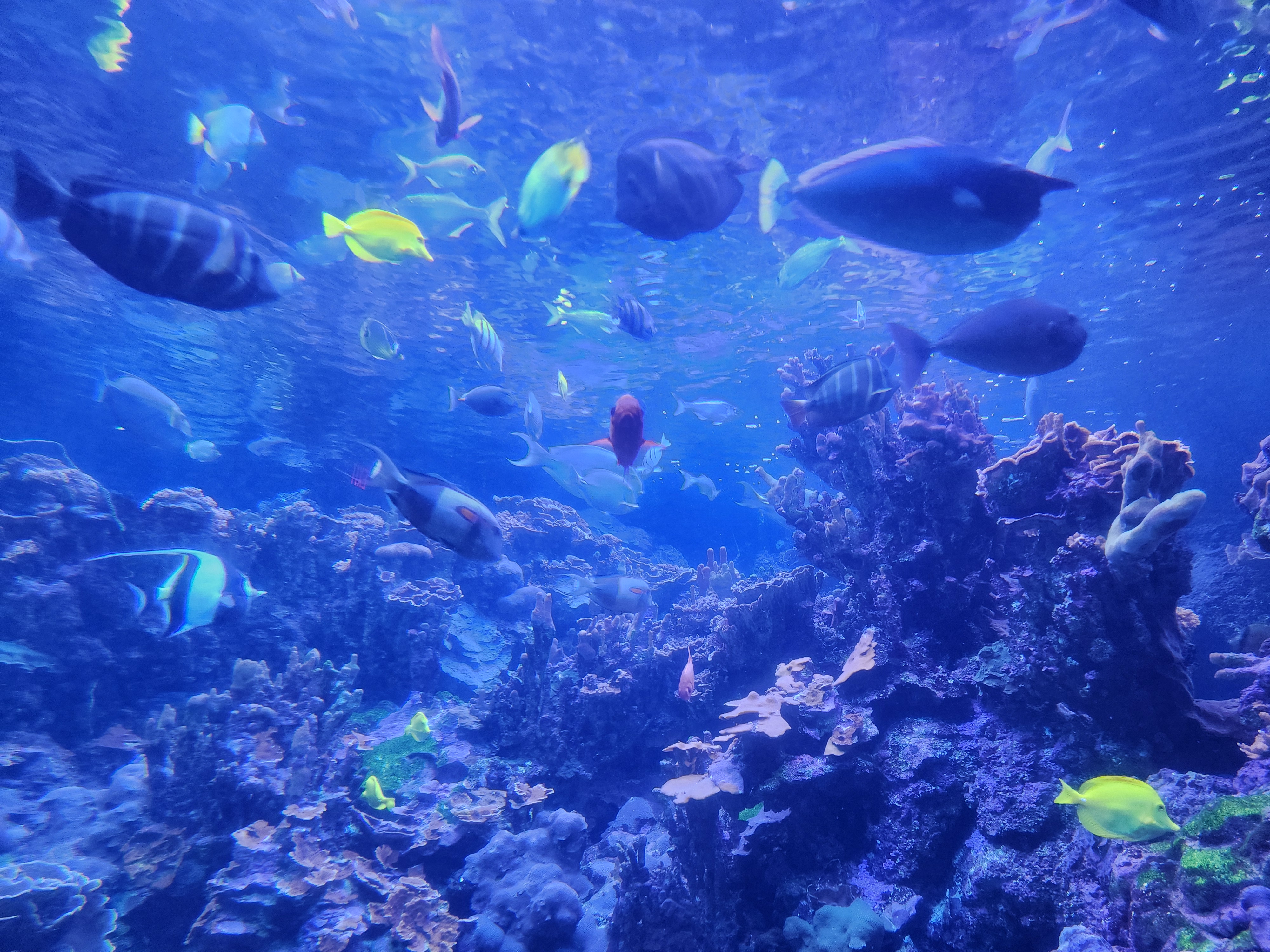

The main tank is a multi-story reef packed with sharks, rays, and

everything else — adjacent to a tunnel that lets you walk through as

sharks glide overhead. Classic aquarium setup. Standard or not, it's

breathtaking.

More thought-provoking was the shark presentation. Most of it

covered familiar ground, but one insight stuck: sharks aren't

malicious. They don't hunt for sport. A well-fed shark has no

biological reason to expend energy chasing something it isn't going

to eat. Before any diver enters the water, the keepers feed the

sharks — thoroughly. A sated shark is an indifferent shark.

The presenter's point: every other predator stops when it's full.

Only humans eat past satiation by choice. That struck a chord. By the

end of the afternoon, it came back to me.

She Becomes He

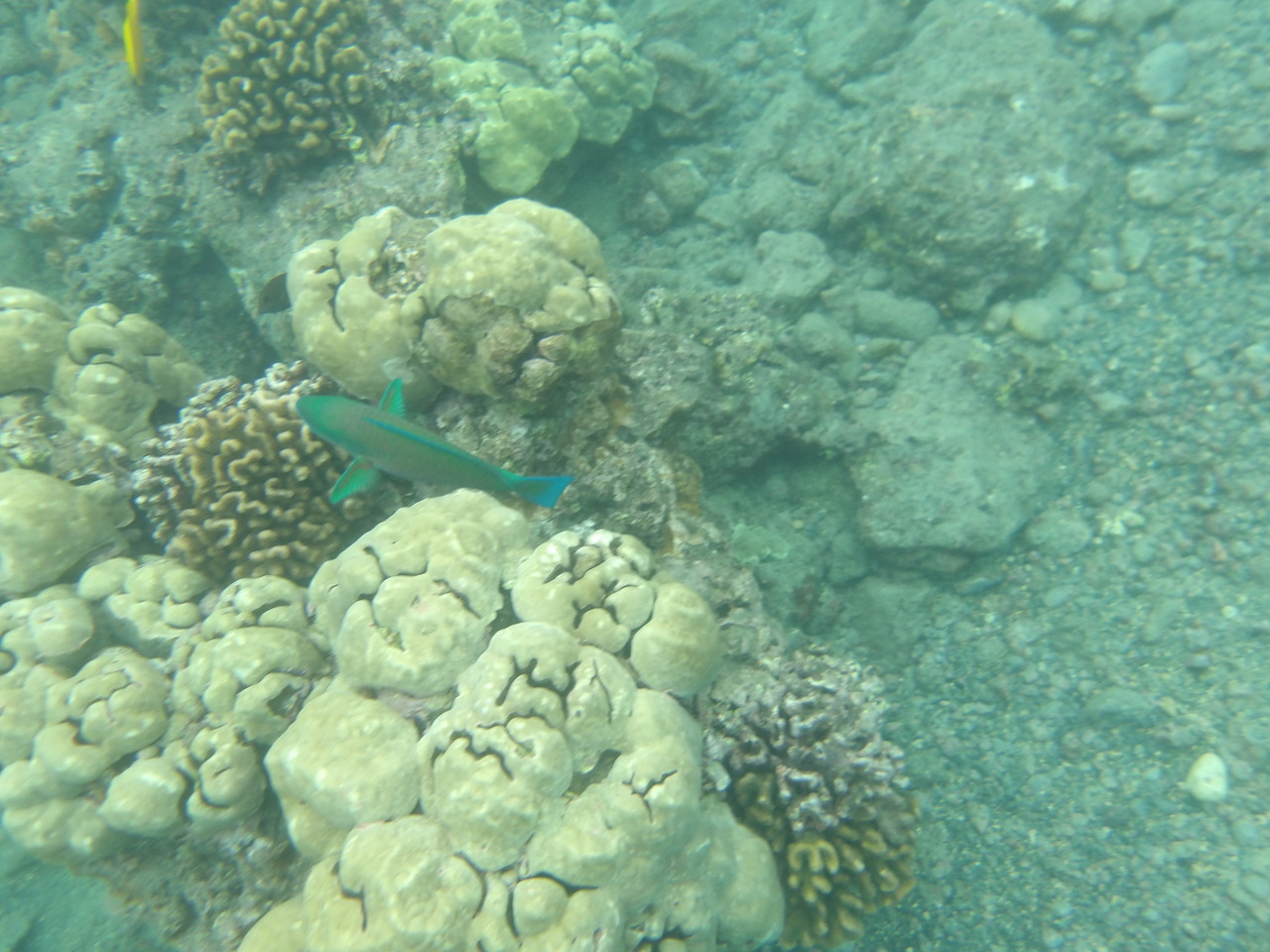

One display described a Hawaiian reef fish, the wrasse, with an unusual life
strategy. The fish are born female. When the dominant male in a group dies,
the largest female undergoes a full sex transition and takes over the male
role completely.

Just try telling Mother Nature something is impossible.

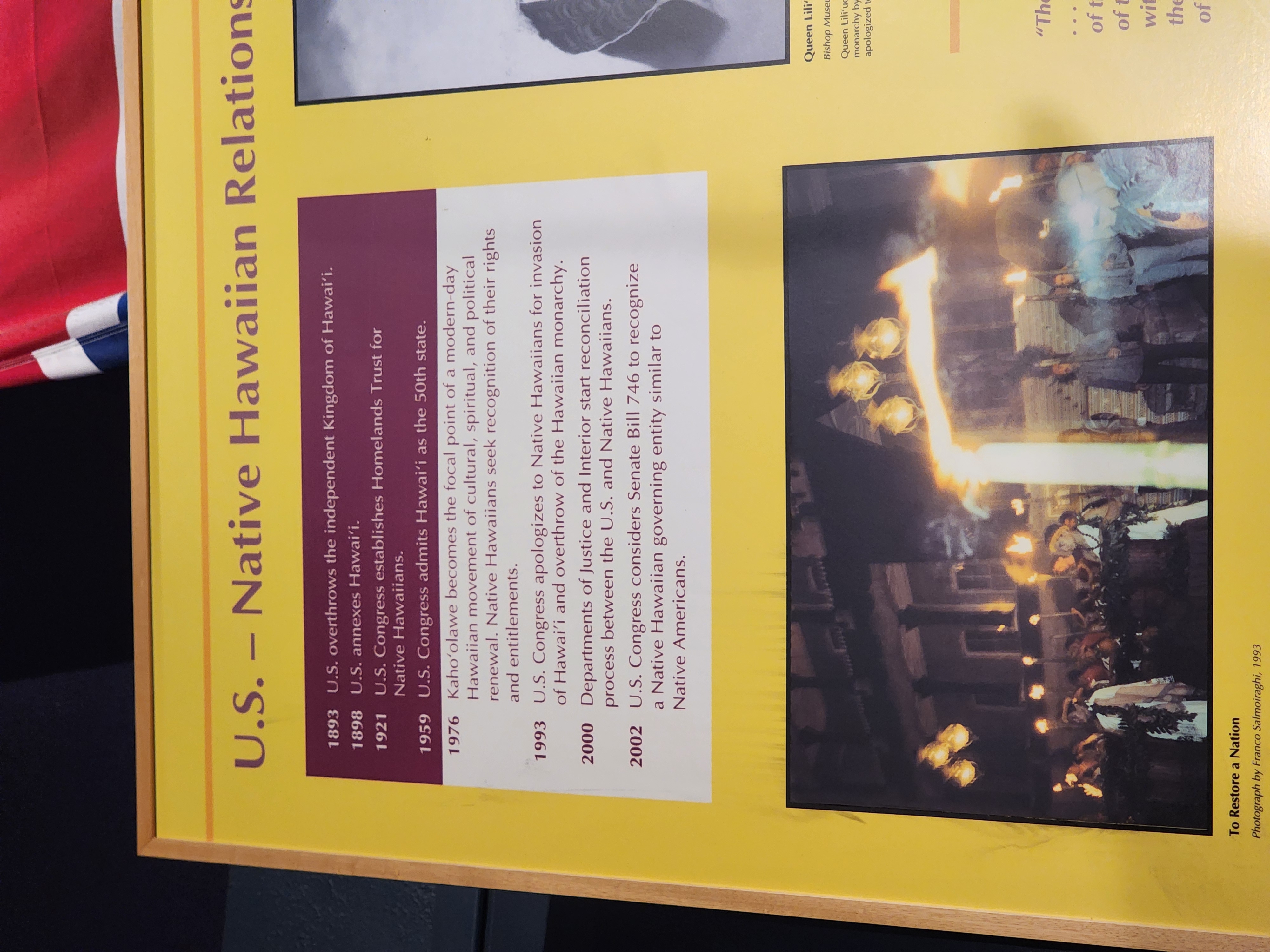

A History Lesson I Wasn't Expecting

Between the tanks, the aquarium has displays on Hawaiian history and culture.
I stopped at one with the understated title: "U.S. - Native Hawaiian
Relations." It gave a timeline of the annexation of Hawaiʻi. I realized that
while I knew Hawaiʻi was a state, I'd never asked how it became one.

The short version: in 1893, a group of American and European businessmen,
backed by U.S. Marines who had already landed the day before, overthrew
Queen Liliʻuokalani and dissolved the Hawaiian Kingdom. The driving force
wasn't ideology or democracy. It was sugar. Hawaiian sugar had enjoyed
preferential access to American markets; when that advantage was eliminated,
annexation became the only way to restore it. The businessmen wanted back
into the market. The military wanted Pearl Harbor. The math wasn't
complicated.

Queen Liliʻuokalani surrendered under formal written protest, explicitly
stating she was yielding to the "superior force of the United States of
America." President Cleveland investigated, concluded the coup was illegal,
and tried to restore her. He couldn't. His successor finished the job:
Hawaiʻi was annexed in 1898 via a joint resolution, specifically chosen over
a formal treaty because a treaty required two-thirds of the Senate, and they
didn't have the votes.

More than 38,000 Native Hawaiians, out of a population of roughly 40,000,
signed petitions against annexation. At the ceremony, the Royal Hawaiian Band
wept. Most Hawaiians refused to attend.

As I pondered this heartbreaking origin story, I realized that on the same
day we visited the aquarium we had woken to the news that the United States
had launched a military operation to remove the leader of Venezuela, a
sovereign nation sitting on top of enormous oil reserves. Trump's own words,
days later: "The Oil is beginning to flow." Sugar. Oil. The details had
changed, but that insatiable appetite had struck again.

I like to think we're more evolved than our predecessors. That we

understand sovereignty now; heck, in 1993 we formally apologized for the

overthrow. We're better than this. And yet, waking to the news

that America had invaded a country whose resources it eagerly planned

to exploit, while standing in a country that had suffered the same

fate — it was hard to make that case.

The Quirkiest Find on Site

Outside, in 'Nursery Bay' across from 'Turtle Lagoon', sat an object that had
no obvious business being there.

Torpedo-shaped. Six to eight feet long. Small stabilizing fins. Vintage in

aesthetic — the kind of thing that looks technologically serious but also

clearly old, like a prop from a Cold War thriller.

The placard explained: a Soviet submarine communication device. A sub running

at depth would tow it on a long cable; the buoy would skim the surface,

letting the sub transmit and receive radio signals without surfacing and

becoming a target. Single-use. Disposable. It had washed ashore in Hawaiʻi,

and nobody knew what it was.

First off, what a clever solution to a baffling challenge. A submarine that
surfaces is a far easier target than one at depth. But most radio signals
can't be sent or received at depth, so you're literally and metaphorically in
the dark. This fix, sending a cheap, almost drone-like middleman to the
surface, neatly solves the problem. The sub doesn't have to expose itself,
yet radio signals can find a way into the murky depths. Genius.

If that had been all there was to the exhibit, I'd have been impressed. And
yet, there's a whole other level of cool with this artifact. When I went home
and researched it, the first link that came up was this Reddit thread. Turns
out, someone found the thing on the beach, posted a photo to
r/whatisthisthing, and the internet collectively identified it. A cross-post
to r/WarshipPorn confirmed it as Soviet naval hardware. The aquarium didn't
conduct archival research or consult a naval historian. The cherry on top:
they cited the Reddit thread on the museum placard.

I respect that enormously. The answer was right, the source was credited, and

a piece of Cold War hardware found a home in an aquarium reef tank because

some guy posted a picture and the internet knew what it was. Distributed

knowledge working exactly as it should.

The Blue Whale

The aquarium charges extra for the 3D film, and there's a wait between

showings. We paid and waited.

The waiting area primes you with text panels covering everything the film is

about to show: blue whale song, calving behavior, the complexity of whale

communication, the emerging argument for recognizing whales as something

approaching persons — the term "non-human person" appeared, which I'd never

encountered before. By the time the doors opened, I'd read the whole film.

It didn't matter. The film was breathtaking anyway.

The kids seated near us kept standing up, reaching toward the screen as the

whale came at them. You can know everything that's coming and still be moved

by how it arrives. The Maui Ocean Center figured out something a lot of

filmmakers haven't: 3D works when the thing filling the screen is something

you already care about. A blue whale at full scale, emerging from the dark,

is one of those things.

We left as they closed the doors at 5pm. Three hours turned out to

be exactly right — enough to be moved, not so long you get

complacent. I'm glad I didn't skip it.

Lesson learned: serendipity is great. But sometimes the prepared walls have

something real inside them. You just have to be willing to walk in.