But Why?

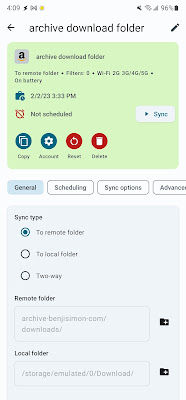

I love the idea of downloading a large area's worth of USGS maps, dropping them on a Micro SD card, and keeping them in my 'back pocket' for unexpected use. Sure, Google and Back Country Navigator's offline map support is more elegant and optimized, but there's just something reassuring about having an offline catalog at your fingertips.

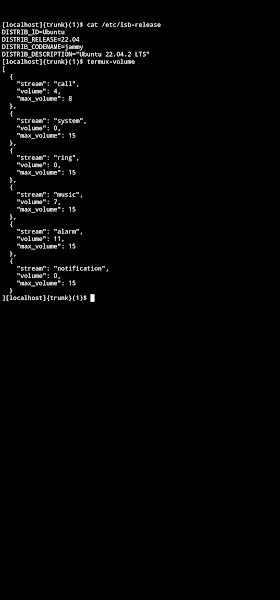

Downloading maps in bulk is easy enough. For example, I can ask my USGS command line tool for all the maps that cover Virginia:

$ usgsassist -a topos -l "Virginia, USA" | wc -l

1697

The problem is that each map is about 50 megs. I confirmed this by looking at the 4 maps that cover Richmond, VA:

$ wget $(usgsassist -a topos -l "Richmond, VA" | cut -d'|' -f3)

...

$ ls -lh

total 427440

-rw------- 1 ben staff 53M Sep 23 00:20 VA_Bon_Air_20220920_TM_geo.pdf

-rw------- 1 ben staff 56M Sep 17 00:17 VA_Chesterfield_20220908_TM_geo.pdf

-rw------- 1 ben staff 48M Sep 23 00:21 VA_Drewrys_Bluff_20220920_TM_geo.pdf

-rw------- 1 ben staff 51M Sep 23 00:22 VA_Richmond_20220920_TM_geo.pdf

Multiplying this out (1,697 maps at about 50 megs each), it will take about 84 gigs of space to store these maps. With storage space requirements like these, I'll quickly exhaust what I can fit on a cheap SD card.
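The arithmetic behind that estimate can be sketched in a few lines. The 50 MB average is the rough per-map figure from the Richmond sample, not a measured statewide average, and `estimate_gb` is a hypothetical helper name used only for illustration.

```python
# Back-of-the-envelope storage estimate using the figures quoted above.
MAP_COUNT = 1697   # maps reported for "Virginia, USA"
AVG_MAP_MB = 50    # rough per-map size (assumption based on the Richmond sample)


def estimate_gb(map_count, avg_mb):
    """Return the estimated total size in decimal gigabytes (1 GB = 1000 MB)."""
    return map_count * avg_mb / 1000


total_gb = estimate_gb(MAP_COUNT, AVG_MAP_MB)
print(f"{MAP_COUNT} maps x {AVG_MAP_MB} MB is roughly {total_gb:.2f} GB")
```

Swapping in a different average (say, the mean of the four Richmond files) is a quick way to see how sensitive the total is to the per-map size.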

This raises the question: can we take any action to reduce this disk space requirement? I think so.

But How?

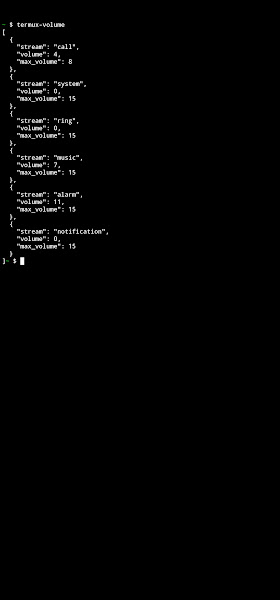

Inside each USGS topo is an 'Images' layer that contains the satellite imagery for the map. By default, this layer is off, so it doesn't appear to be there:

But, if we enable this layer and view the PDF, we can see it:

$ python3 ~/dt/i2x/code/src/master/pdftools/pdflayers -e "Images" -i VA_Drewrys_Bluff_20220920_TM_geo.pdf -o VA_Drewrys_Bluff_20220920_TM_geo.with_images.pdf

My hypothesis is that most of the 50 megs of these maps go towards storing this image. I rarely use this layer, so if I can remove it from the PDF, the result should be a notable decrease in file size and no change in functionality.
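For a quick look at which layers a map carries, and whether each one is on by default, a minimal sketch using PyMuPDF's `Document.get_ocgs()` might look like this. It assumes PyMuPDF is installed and is not the pdflayers script used above; `list_layers` and `format_layers` are hypothetical helper names.

```python
def format_layers(ocgs):
    """Turn a {xref: {"name": ..., "on": ...}} mapping into printable lines."""
    lines = []
    for xref, info in sorted(ocgs.items()):
        state = "on " if info.get("on") else "off"
        lines.append(f"[{state}] {info.get('name', '?')} (xref {xref})")
    return lines


def list_layers(pdf_path):
    """Read the optional content groups (layers) from a PDF via PyMuPDF."""
    import fitz  # PyMuPDF; imported here so format_layers works without it

    with fitz.open(pdf_path) as doc:
        # get_ocgs() returns a dict keyed by xref, with "name" and "on" entries
        return doc.get_ocgs()
```

Calling `format_layers(list_layers("VA_Drewrys_Bluff_20220920_TM_geo.pdf"))` should list the 'Images' layer as off if the map behaves as described above.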

But Really?

To test this hypothesis, I decided to extract the image from the PDF. If it was as hefty as I thought, I'd continue with this effort to remove it. If it wasn't that large, I'd stop worrying about this and accept that each USGS map is going to take about 50 megs of disk space.

My first attempt at image extraction was to use the pdfimages command from the Poppler PDF tools. But alas, this gave me a heap of error messages and didn't extract any images.

$ pdfimages VA_Bon_Air_20220920_TM_geo.pdf images

Syntax Error (11837): insufficient arguments for Marked Content

Syntax Error (11866): insufficient arguments for Marked Content

Syntax Error (11880): insufficient arguments for Marked Content

Syntax Error (11883): insufficient arguments for Marked Content

...

Next up, I found a useful snippet of code in this Stack Overflow discussion. Once again, PyMuPDF was looking like it was going to save the day.

I ended up adapting that Stack Overflow code into a custom Python pdfimages script.
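The general shape of this kind of extraction can be sketched as follows. This is a minimal sketch assuming PyMuPDF is installed, not the script itself: `image_name` and `extract_images` are hypothetical names, and using the image's xref as the numeric suffix in the file name is an assumption.

```python
from pathlib import Path


def image_name(pdf_path, page_index, xref):
    """Build an output name like '<pdf stem>_p0-100.png'."""
    return f"{Path(pdf_path).stem}_p{page_index}-{xref}.png"


def extract_images(pdf_path, out_dir):
    """Save every image referenced by each page as a PNG; return the count."""
    import fitz  # PyMuPDF; imported here so image_name works without it

    out = Path(out_dir)
    out.mkdir(parents=True, exist_ok=True)
    count = 0
    with fitz.open(pdf_path) as doc:
        for page_index in range(doc.page_count):
            for img in doc.get_page_images(page_index):
                xref = img[0]  # first tuple item is the image's xref
                pix = fitz.Pixmap(doc, xref)
                if pix.n - pix.alpha >= 4:
                    # CMYK can't be written as PNG; convert to RGB first
                    pix = fitz.Pixmap(fitz.csRGB, pix)
                pix.save(str(out / image_name(pdf_path, page_index, xref)))
                count += 1
    return count
```

A call such as `extract_images("VA_Drewrys_Bluff_20220920_TM_geo.pdf", "extracted/")` would write one PNG per embedded image and return how many it saved.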

When I finally ran my script on one of the PDF map files, I was surprised by the results:

$ python3 ~/dt/i2x/code/src/master/pdftools/pdfimages -i VA_Drewrys_Bluff_20220920_TM_geo.pdf -o extracted/

page_images: 100%|██████████| 174/174 [00:18<00:00, 9.51it/s]

pages: 100%|██████████| 1/1 [00:18<00:00, 18.31s/it]

$ ls -lh extracted/ | head

total 361456

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-100.png

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-101.png

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-102.png

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-103.png

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-104.png

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-105.png

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-106.png

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-107.png

-rw------- 1 ben staff 1.0M Feb 8 07:22 VA_Drewrys_Bluff_20220920_TM_geo_p0-108.png

$ ls extracted/ | wc -l

174

Rather than extracting one massive image, it extracted 174 small ones. While not what I was expecting, the small files do add up to a significant payload:

$ du -sh extracted

176M extracted

Each of these image files is one thin slice of the satellite

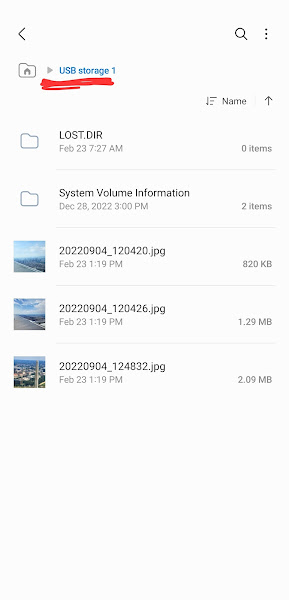

photo. Here's an example:

I find all of this quite promising. There's over 170 megs' worth of image data that's been compressed into a 50 meg PDF. If I can remove that image data, the file size should drop significantly.
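One thing worth keeping in mind: the PNG files on disk are not byte-for-byte what the PDF stores, so their combined size doesn't directly say how much of the PDF the images occupy. A rough way to check, assuming PyMuPDF is installed, is to add up the raw (still compressed) stream length of each image object. This is only a sketch; `image_stream_sizes` and `summarize` are hypothetical names, and soft masks are ignored.

```python
import os


def summarize(stream_sizes, file_size):
    """Return (total image bytes, share of the whole file as a fraction)."""
    total = sum(stream_sizes)
    share = total / file_size if file_size else 0.0
    return total, share


def image_stream_sizes(pdf_path):
    """Collect the compressed stream length of each distinct image in a PDF."""
    import fitz  # PyMuPDF; imported here so summarize works without it

    sizes = []
    seen = set()
    with fitz.open(pdf_path) as doc:
        for page_index in range(doc.page_count):
            for img in doc.get_page_images(page_index):
                xref = img[0]
                if xref in seen:  # an image can be referenced more than once
                    continue
                seen.add(xref)
                # xref_stream_raw returns the stream bytes without decompressing
                sizes.append(len(doc.xref_stream_raw(xref)))
    return sizes


def image_share(pdf_path):
    """Convenience wrapper: image bytes and their share of the file size."""
    return summarize(image_stream_sizes(pdf_path), os.path.getsize(pdf_path))
```

If `image_share` reports that the images make up most of the file, that would support the hypothesis; a small share would suggest the bulk lies elsewhere.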

Next up: I'll figure out a way to remove this image data while still maintaining the integrity of the map files. I'm psyched to see just how small these files can be!